SDLC ABM

Python, TypeScript, Software Development, Complexity Theory, Agent-Based Models

Forecast the impact of organizational changes before you make them.

Summary

SDLC SimLab is an agent-based modeling platform that simulates software development team dynamics. It represents developers — both human and AI — as autonomous agents with realistic communication patterns, productivity rates, and quality profiles, letting engineering leaders model “what-if” scenarios with quantitative rigor.

The Problem

Engineering organizations face high-stakes decisions with limited data:

- Scaling teams doesn’t produce linear output gains — communication overhead and onboarding costs create diminishing returns.

- Scope and schedule changes ripple unpredictably through teams.

- Resource allocation tradeoffs between team size, timeline, and quality are difficult to quantify.

- AI augmentation introduces new variables — review burden, cost structures, and human/AI ratios — with no established playbook.

How It Works

- Configure your team — Define agents with experience levels, productivity rates, code quality, review capacity, and specializations. Mix human and AI developers.

- Set the scenario — Choose communication models, team structure, timeline, and scope. Import historical data from GitHub, GitLab, or CSV for calibration.

- Run simulations — Execute single runs or Monte Carlo simulations (100–10,000 iterations) to generate confidence intervals.

- Compare outcomes — Evaluate scenarios side-by-side across throughput, quality, cycle time, risk, and cost metrics.

- Optimize — Use built-in optimization to find the best team configuration for your objectives and constraints.
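The configure, run, and compare loop above can be illustrated with a small Monte Carlo sketch. Everything in it is hypothetical rather than SimLab's actual API: the `Agent` fields, the rule that AI output only counts after human review (capped by the humans' combined `review_capacity`), and the normally distributed daily productivity are all assumptions chosen to show how repeated randomized runs turn into a forecast with a confidence interval.

```python
import random
import statistics
from dataclasses import dataclass


@dataclass
class Agent:
    # Hypothetical agent profile; field names are illustrative, not SimLab's schema.
    name: str
    is_ai: bool
    productivity: float            # mean work units completed per day
    productivity_sd: float         # day-to-day variability (standard deviation)
    review_capacity: float = 0.0   # units of AI output this agent can review per day


def simulate_sprint(team: list[Agent], days: int, rng: random.Random) -> float:
    """Return the work units delivered in one simulated sprint.

    Assumption: AI output only counts once a human has reviewed it, so delivered
    AI work is capped each day by the humans' combined review capacity.
    """
    review_budget = sum(a.review_capacity for a in team if not a.is_ai)
    delivered = 0.0
    for _ in range(days):
        human_out = ai_out = 0.0
        for agent in team:
            # Draw today's output; negative draws are clipped to zero.
            out = max(0.0, rng.gauss(agent.productivity, agent.productivity_sd))
            if agent.is_ai:
                ai_out += out
            else:
                human_out += out
        delivered += human_out + min(ai_out, review_budget)
    return delivered


def monte_carlo(team: list[Agent], days: int = 10, iterations: int = 1000, seed: int = 42):
    """Repeat the sprint simulation and return (mean, 5th percentile, 95th percentile)."""
    rng = random.Random(seed)
    results = [simulate_sprint(team, days, rng) for _ in range(iterations)]
    cuts = statistics.quantiles(results, n=20)  # 19 cut points at 5% steps
    return statistics.mean(results), cuts[0], cuts[-1]


if __name__ == "__main__":
    team = [
        Agent("senior", is_ai=False, productivity=3.0, productivity_sd=1.0, review_capacity=2.0),
        Agent("mid", is_ai=False, productivity=2.0, productivity_sd=0.8, review_capacity=1.0),
        Agent("assistant", is_ai=True, productivity=4.0, productivity_sd=1.5),
    ]
    mean, p5, p95 = monte_carlo(team)
    print(f"mean={mean:.1f} units, 90% interval=[{p5:.1f}, {p95:.1f}]")
```

Comparing scenarios then amounts to calling `monte_carlo` on two team definitions with the same seed and iteration count and comparing the resulting intervals, not just the means.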

Key Capabilities

- Agent-Based Modeling — Each developer is a distinct agent with unique attributes, not an average.

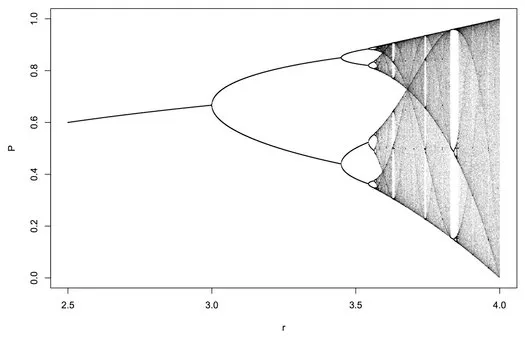

- Lossy Communication Modeling — Realistic information degradation across team sizes and structures (linear, quadratic, or hierarchical scaling).

- Human + AI Team Simulation — Model mixed teams with asymmetric review flows, different cost structures, and 24/7 AI availability.

- Monte Carlo Analysis — Generate statistically robust forecasts with confidence intervals.

- Historical Calibration — Validate model accuracy against real team data (target: within 15% of actuals).

- Scenario Comparison — Run multiple configurations and compare projected outcomes.
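The three communication scaling modes named under Lossy Communication Modeling can be made concrete by counting channels. The quadratic case is Brooks's well-known n(n-1)/2 pairwise count; reading "linear" as hub-and-spoke and "hierarchical" as full-mesh sub-teams whose leads form their own mesh is an assumption, as are the fixed `cost_per_channel` and the constant `loss_per_hop` used to model information degradation. This is an illustrative sketch, not SimLab's engine.

```python
def channels(n: int, model: str, team_size: int = 6) -> int:
    """Count communication channels among n developers under one scaling model."""
    if n < 2:
        return 0
    if model == "linear":
        # Hub-and-spoke: each developer talks only to a single coordinator.
        return n - 1
    if model == "quadratic":
        # Full mesh (Brooks): every pair of developers shares a channel.
        return n * (n - 1) // 2
    if model == "hierarchical":
        # Sub-teams of at most team_size are full meshes internally, and
        # one lead per sub-team forms a full mesh with the other leads.
        full, rem = divmod(n, team_size)
        teams = full + (1 if rem else 0)
        within = full * team_size * (team_size - 1) // 2 + rem * (rem - 1) // 2
        return within + teams * (teams - 1) // 2
    raise ValueError(f"unknown model: {model!r}")


def effective_output(n: int, model: str, productivity: float = 1.0,
                     cost_per_channel: float = 0.02) -> float:
    """Raw output minus a fixed coordination cost per channel, floored at zero."""
    return max(0.0, n * productivity - cost_per_channel * channels(n, model))


def fidelity(hops: int, loss_per_hop: float = 0.1) -> float:
    """Share of a message's information that survives `hops` relays."""
    return (1.0 - loss_per_hop) ** hops


if __name__ == "__main__":
    for n in (5, 10, 25, 50, 100):
        row = {m: round(effective_output(n, m), 1) for m in ("linear", "quadratic", "hierarchical")}
        print(n, row)
    # In the hierarchical model, a cross-team message goes member -> lead -> lead -> member.
    print("cross-team fidelity:", round(fidelity(3), 3))
```

Under these assumptions the quadratic model shows the diminishing, then negative, returns described in The Problem: effective output for 100 developers falls below that for 50, while the hierarchical model trades fewer channels for extra relay hops and therefore lower message fidelity.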

Who It’s For

- VPs and Directors of Engineering making strategic decisions about hiring, team structure, and priorities across organizations of 50–200+ engineers.

- Engineering Managers planning team composition and sprint capacity for teams of 8–30 engineers.

- Technical Program Managers coordinating cross-team dependencies, timelines, and resource allocation.

Grounded in Research

Built on principles from The Mythical Man-Month, DORA metrics, and Accelerate: The Science of Lean Software and DevOps. The simulation engine models real phenomena — Brooks’s Law, communication overhead, onboarding curves, and technical debt accumulation.
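Brooks's Law, the observation from The Mythical Man-Month that adding people to a late project makes it later, falls out of two of the phenomena listed above: onboarding curves and the time veterans spend bringing newcomers up to speed. The sketch below assumes a linear ramp to full productivity over `ramp_days` and a fixed per-hire `mentoring_cost` drawn from veteran time; both shapes and all parameter values are hypothetical, not the simulation engine's calibrated model.

```python
def ramp(days_since_join: int, ramp_days: int = 60) -> float:
    """Fraction of full productivity a new hire has reached (linear ramp; an assumption)."""
    if days_since_join < 0:
        return 0.0
    return min(1.0, days_since_join / ramp_days)


def cumulative_output(veterans: int, new_hires: int, join_day: int, horizon: int,
                      ramp_days: int = 60, mentoring_cost: float = 0.25) -> float:
    """Total work units delivered over `horizon` days.

    Veterans each deliver 1.0 unit/day. From `join_day` on, each new hire delivers
    ramp(...) units/day and, until fully ramped, consumes `mentoring_cost`
    units/day of veteran time. All parameters are hypothetical.
    """
    total = 0.0
    for day in range(horizon):
        output = float(veterans)
        if day >= join_day:
            progress = ramp(day - join_day, ramp_days)
            output += new_hires * progress
            if progress < 1.0:
                output -= new_hires * mentoring_cost
        total += output
    return total


if __name__ == "__main__":
    for horizon in (20, 60, 200):
        baseline = cumulative_output(veterans=5, new_hires=0, join_day=0, horizon=horizon)
        scaled = cumulative_output(veterans=5, new_hires=3, join_day=0, horizon=horizon)
        print(f"{horizon:>3} days: baseline={baseline:.1f}, +3 hires={scaled:.1f}")
```

With these illustrative numbers, three new hires reduce total output over a 20-day horizon and only pay off over longer ones, which is the short-deadline tradeoff Brooks's Law describes.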